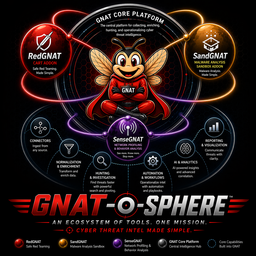

RedGNAT

Continuous Automated Readiness Testing

Validate detections. Confirm coverage. Automate adversary emulation safely.

You assume your detections work. You rarely verify.

- Detection rules are written and never tested against real adversary behavior

- Red team exercises happen once a year — your environment changes weekly

- A rule that was working last quarter may be broken after a SIEM update, a log source change, or a tuning tweak

- Threat intel identifies adversary TTPs — but confirms nothing about your ability to detect them

The readiness gap

Point-in-time testing gives you a readiness snapshot. Continuous testing gives you a readiness state. The snapshot goes stale with the next environment change; the state is re-verified on every run.

CART — Continuous Automated Readiness Testing

- Continuous — validation runs on a recurring schedule, not once per annual exercise. Your readiness state is current as of the latest run.

- Automated — emulation runs are defined once, executed by the platform. No manual red team engagement required per run.

- Readiness — the output is a readiness state: which techniques fire which detections, which have gaps, which regressed.

- Bounded — explicit safety boundaries prevent unintended impact. Emulation is controlled, scoped, and auditable.
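One way to picture a readiness state is as a comparison between the previous run and the current one. The sketch below is illustrative only: the function name, the dictionary shape, and the detection names are assumptions, not RedGNAT's data model. The technique IDs are real ATT&CK IDs chosen as examples.

```python
def readiness_state(previous, current):
    """Label each technique in the current run as detected, regressed, or gap.

    Both arguments map an ATT&CK technique ID to the set of detections
    that fired for it (hypothetical shape, for illustration only).
    """
    state = {}
    for technique_id, fired in current.items():
        if fired:
            state[technique_id] = "detected"
        elif previous.get(technique_id):
            # Something fired last time and nothing fires now.
            state[technique_id] = "regressed"
        else:
            state[technique_id] = "gap"
    return state


previous = {
    "T1059.001": {"siem_ps_encoded"},
    "T1003.001": {"edr_lsass_access"},
    "T1021.002": set(),
}
current = {
    "T1059.001": {"siem_ps_encoded"},
    "T1003.001": set(),
    "T1021.002": set(),
}
state = readiness_state(previous, current)
print(state)
```

Here T1003.001 is reported as regressed rather than as a plain gap, because a detection fired for it in the previous run.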

Controlled emulation. Explicit boundaries.

- Scoped targets — emulation runs against declared targets only. No lateral movement beyond the defined scope.

- Technique allowlist — each run declares exactly which ATT&CK techniques will be executed. Nothing outside the list.

- No live payloads — emulation tests detection coverage without deploying real malicious capability

- Full audit trail — every action is logged, timestamped, and tied to the originating run

- Integration with change management — runs can be gated behind approval workflows before execution
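The boundaries above can be read as a pre-flight check on every planned action. The following is a minimal sketch, assuming targets are identified by hostname and that approval is a simple flag; `SafetyBoundary`, `PlannedAction`, and `boundary_violations` are hypothetical names, not part of RedGNAT.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyBoundary:
    allowed_targets: frozenset      # declared targets (hostnames, by assumption)
    allowed_techniques: frozenset   # ATT&CK technique IDs on the allowlist
    requires_approval: bool = True  # gate the run behind change management


@dataclass(frozen=True)
class PlannedAction:
    target: str
    technique_id: str


def boundary_violations(boundary, actions, approved):
    """Return readable violations; an empty list means the run may proceed."""
    violations = []
    if boundary.requires_approval and not approved:
        violations.append("run has not been approved")
    for action in actions:
        if action.target not in boundary.allowed_targets:
            violations.append(f"target out of scope: {action.target}")
        if action.technique_id not in boundary.allowed_techniques:
            violations.append(f"technique not on allowlist: {action.technique_id}")
    return violations


boundary = SafetyBoundary(
    allowed_targets=frozenset({"lab-win-01", "lab-win-02"}),
    allowed_techniques=frozenset({"T1059.001", "T1053.005"}),
)
plan = [
    PlannedAction("lab-win-01", "T1059.001"),
    PlannedAction("prod-db-01", "T1003.001"),
]
violations = boundary_violations(boundary, plan, approved=True)
print(violations)
```

Rejecting the whole run when any action violates a boundary, rather than silently dropping the offending action, keeps the audit trail consistent with what was actually requested.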

Automation without chaos

The safety boundary model is what makes CART viable for continuous use. The emulation is aggressive enough to test real detection capability, and contained enough to run without a red team standing by.

Triggered by GNAT. Results back to GNAT.

From detection rules to full kill chain coverage

Detection Rules

Does a specific SIEM rule, EDR detection, or network signature fire when the technique is executed?

Alert Fidelity

When the detection fires, does the alert contain enough context for an analyst to act on it? Or is it a true positive with nothing an analyst can use?
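An alert fidelity check could be as simple as confirming that required context fields are present and non-empty. The field list below is an assumption made for illustration; which fields count as "enough context" depends on your own triage process.

```python
# Assumed minimum context for triage; adjust to your own alert schema.
REQUIRED_CONTEXT = ("host", "user", "process", "technique_id", "timestamp")


def missing_context(alert, required=REQUIRED_CONTEXT):
    """Return the required fields that are absent or empty in an alert dict."""
    return [field for field in required if not alert.get(field)]


def is_actionable(alert, required=REQUIRED_CONTEXT):
    """An alert is actionable here only if no required field is missing."""
    return not missing_context(alert, required)


alert = {
    "host": "lab-win-01",
    "user": "",
    "technique_id": "T1059.001",
    "timestamp": "2024-01-02T03:04:05Z",
}
print(missing_context(alert))
print(is_actionable(alert))
```

In this example the detection fired, but the alert carries no user and no process, so it would be flagged as low fidelity.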

Coverage Gaps

Which ATT&CK techniques in the current threat actor profile have no detection coverage? Surface the gaps before they're exploited.

Regression Detection

Which detections that passed last week are failing now? Change-driven regression caught before the next real incident.

Kill Chain Coverage

Map detection coverage across the full ATT&CK kill chain. See where the chain is covered and where adversaries can operate undetected.
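Coverage mapping can be sketched as grouping a threat profile's techniques by tactic and checking each one against the set of techniques that were detected. The profile below is a hypothetical five-technique slice; real techniques can belong to several tactics, and this example assigns each to a single tactic for brevity.

```python
from collections import defaultdict

# Hypothetical threat-profile slice: technique ID -> one ATT&CK tactic.
PROFILE = {
    "T1566.001": "initial-access",
    "T1059.001": "execution",
    "T1053.005": "persistence",
    "T1003.001": "credential-access",
    "T1021.002": "lateral-movement",
}


def coverage_by_tactic(profile, detected):
    """Return {tactic: (covered_ids, uncovered_ids)} for the given profile."""
    report = defaultdict(lambda: ([], []))
    for technique_id, tactic in sorted(profile.items()):
        covered, uncovered = report[tactic]
        (covered if technique_id in detected else uncovered).append(technique_id)
    return dict(report)


def blind_tactics(profile, detected):
    """Tactics in which no profiled technique was detected."""
    report = coverage_by_tactic(profile, detected)
    return sorted(tactic for tactic, (covered, _) in report.items() if not covered)


DETECTED = {"T1566.001", "T1059.001"}
print(blind_tactics(PROFILE, DETECTED))
```

With only the first two stages detected, the later stages of the chain show up as blind spots.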

TTP-Specific Validation

New campaign TTP intel triggers targeted validation of your detection capability for exactly those techniques.

From detonation findings to validated detection

SandGNAT → RedGNAT

SandGNAT detonates a suspicious artifact and maps behaviors to ATT&CK techniques. RedGNAT takes those specific techniques and runs a targeted validation — does your detection stack catch what this sample actually does?

The validation loop

This closes the loop from artifact to coverage. You don't just know the sample is malicious — you know whether your defenses would catch it in the wild.

Stop assuming. Start knowing.

- Rules are continuously validated — regressions surface immediately, not at the next annual exercise

- New TTP intel from GNAT investigations automatically triggers validation runs for relevant techniques

- Coverage gaps are mapped against the ATT&CK framework — you can see where you're blind

- Alert fidelity validation confirms detections don't just fire — they fire with usable context

- Validation results are STIX objects — they can be queried, trended, and reported like any other intelligence
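The source does not say which STIX object type or extension RedGNAT uses for validation results. As a rough illustration of what "queryable like any other intelligence" could mean, the sketch below builds a plain dictionary with the STIX 2.1 common properties (`type`, `spec_version`, `id`, `created`, `modified`) and a hypothetical custom type `x-validation-result`. The custom property names are assumptions as well.

```python
import json
import uuid
from datetime import datetime, timezone


def stix_timestamp(moment):
    """Format an aware datetime as a UTC timestamp with millisecond precision."""
    utc = moment.astimezone(timezone.utc)
    return utc.strftime("%Y-%m-%dT%H:%M:%S") + f".{utc.microsecond // 1000:03d}Z"


def validation_result(technique_id, outcome, run_id, now=None):
    """Build a STIX-style object for one validation outcome (hypothetical schema)."""
    stamp = stix_timestamp(now or datetime.now(timezone.utc))
    return {
        "type": "x-validation-result",               # hypothetical custom type
        "spec_version": "2.1",
        "id": f"x-validation-result--{uuid.uuid4()}",
        "created": stamp,
        "modified": stamp,
        "x_technique_id": technique_id,              # assumed property names
        "x_outcome": outcome,
        "x_run_id": run_id,
    }


results = [
    validation_result("T1059.001", "detected", "run-42"),
    validation_result("T1003.001", "missed", "run-42"),
]
# Because results are plain structured objects, querying is a filter.
missed = [r["x_technique_id"] for r in results if r["x_outcome"] == "missed"]
print(json.dumps(results[1], indent=2))
```

Trending then amounts to running the same filter across runs and comparing counts over time.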

A readiness state, not a readiness assumption

- Continuous evidence — readiness is a live state, not a point-in-time report from 8 months ago

- Risk quantification — coverage gaps are visible and trackable, not theoretical

- Change impact — SIEM updates, log source changes, and tuning decisions show their detection impact immediately

- Audit trail — every validation run is logged. Regulatory and compliance narratives are supported by evidence.

The board-level argument

When asked "could you detect this attack?" the answer with CART is a data-backed one — validated this week against your actual environment — not an assumption based on when the rule was written.

Getting started with RedGNAT

- Have GNAT core deployed with the workflow engine active

- Deploy RedGNAT and configure target scope and safety boundaries

- Define your first validation run — pick 3–5 high-priority ATT&CK techniques relevant to your current threat model

- Review results: detected, missed, partial. Fix or accept gaps explicitly.

- Configure scheduled runs and event-driven triggers as confidence grows
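For the review step, the three outcomes can be derived by comparing the detections you expected for each technique against those that actually fired. This is a sketch under assumptions: the run definition format and the detection names are hypothetical, and "partial" is taken to mean that some but not all expected detections fired.

```python
def classify(expected, fired):
    """Classify one technique as detected, partial, or missed."""
    expected = set(expected)
    hits = expected & set(fired)
    if not hits:
        return "missed"
    return "detected" if hits == expected else "partial"


# A first run with three high-priority techniques and their expected detections.
FIRST_RUN = {
    "T1059.001": {"siem_ps_encoded", "edr_ps_script"},
    "T1003.001": {"edr_lsass_access"},
    "T1053.005": {"siem_schtask_create"},
}
# What actually fired during the run (illustrative data).
fired = {
    "T1059.001": {"siem_ps_encoded"},
    "T1003.001": {"edr_lsass_access"},
    "T1053.005": set(),
}
results = {tid: classify(exp, fired.get(tid, ())) for tid, exp in FIRST_RUN.items()}
print(results)
```

A technique with no expected detections is classified as missed here, which makes an undeclared gap visible instead of letting it pass silently.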